Your startup has a prototype web application running on a desktop computer. Now it’s time to make it available to the general public. Eventually it would need to serve millions of users from all over the world. But for now you’re more concerned with giving users the best possible experience while keeping costs under control.

But deploying a web application for the general public has its own set of problems:

- How do you ensure quick load times to a geographically diverse audience?

- How do you keep tuning your databases and ensure their availability when faced with an ever-increasing volume?

- How do you ensure backups are available should errors occur, whether caused by humans or by computers?

- How do you archive old data? Do you need to comply with regulatory requirements that you maintain customer data for a certain number of years?

- What about disaster recovery? Resilience? Undersea cable damage?

- How do you keep your users’ data secure – both in transit and at rest? You wouldn’t want your company to be the source of a data breach, would you?

- How do you control access to your production environments – more so as your technical team grows?

Wouldn’t it be great if you and your staff could just focus on building your app (developing its features and taking care of its users) and outsource everything else that is not unique to your startup?

Yes, you can.

I’ll show you how you can architect a web application that can scale from a few concurrent users to millions. With this in place, you can focus on working on the app’s features, and your operations people need only take care of your users. You can buy infrastructure on a pay-as-you-go model, increase capacity as the user base grows, and shrink it as needed if your market is seasonal.

For this example I’ll be using services from AWS for illustrative purposes. However, you should be able to adapt the reference architecture to just about any other cloud provider of your choosing.

Architecture Overview

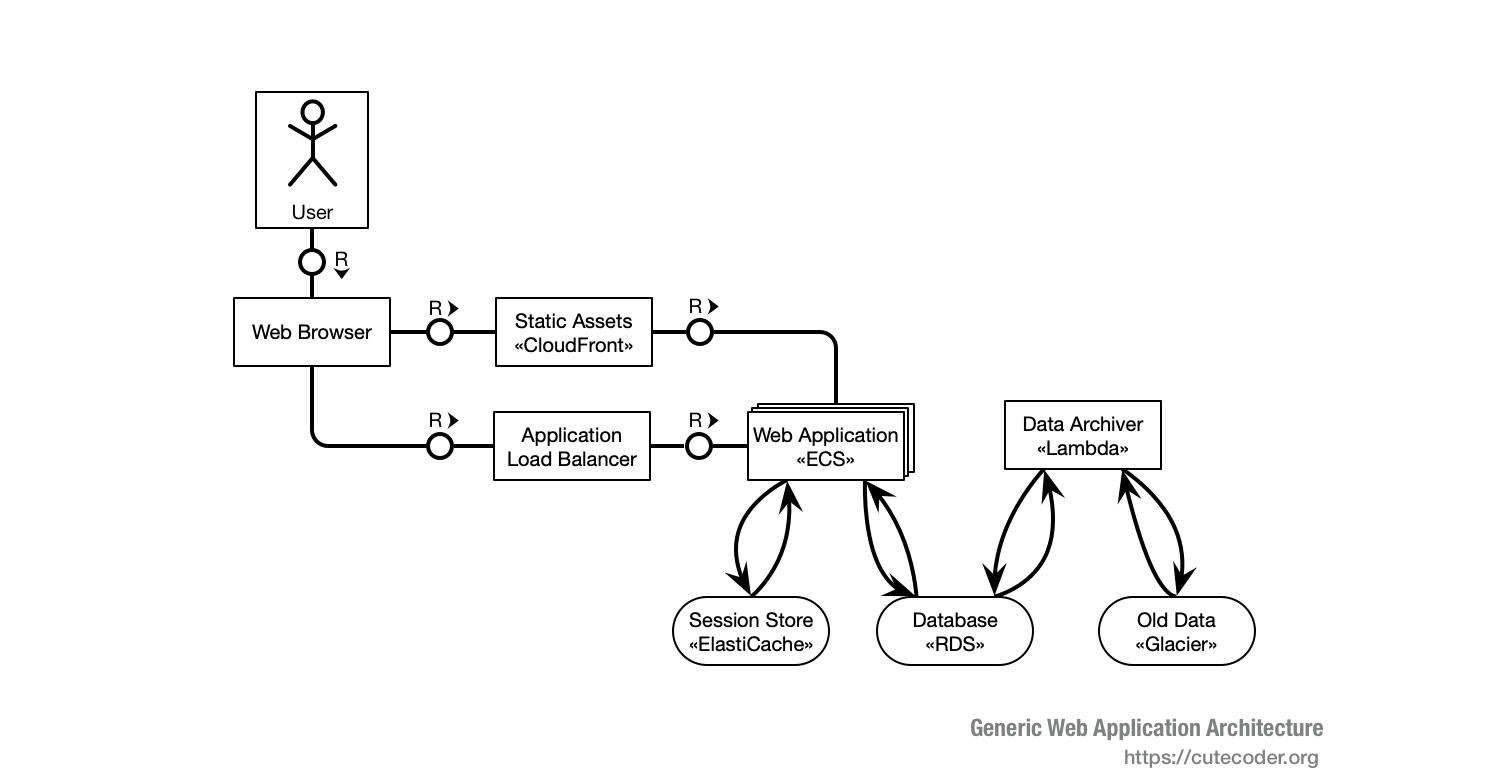

The diagram above is a TAM block diagram describing the general components of the application and how they are interconnected. This is a “standard” web application architecture designed for horizontal scaling.

Here’s how you would typically deploy such a web application on AWS:

- The web application would be deployed as Amazon Elastic Container Service (ECS) instances.

- Static Assets are served through Amazon CloudFront.

- The user’s web browser accesses web application instances through Application Load Balancer.

- Web session data are stored in Amazon ElastiCache.

- Databases are managed by Amazon Relational Database Service (RDS).

- Long-term archives are stored via Amazon Glacier.

- The archiving business logic is run by AWS Lambda.

As you can see, the architecture doesn’t impose any specific programming language – you can use PHP, Java, or even Swift to develop your web application. However, there are a few constraints that you need to follow:

- The web application must be able to run under Docker.

- The web framework must be able to externalize session data to remote storage.

Being able to run under Docker also means that it needs to run under some flavor of Linux. If it can’t run on Linux, then your options for cloud providers would be severely limited. The app should also not modify itself (i.e. change its own scripts or application files), as some cloud providers reset the app’s file system upon restart.

The web application should also be stateless – it should not maintain user-specific data in its memory beyond a single request/response cycle. Any session data should be externalized into a remote service – typically Redis or Memcached. Externalizing sessions is mandatory for horizontal scaling, since the next request from the user can be handled by another instance of the application, which in turn can run on different hardware altogether.
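
To make the stateless constraint concrete, here is a minimal sketch of two application instances sharing one session store. Everything in it is an assumption for illustration: `InMemorySessionStore` is a stand-in for a remote service such as Redis or Memcached, and `AppInstance` and `handle_add_to_cart` are hypothetical names, not part of any framework.

```python
import json
import uuid


class InMemorySessionStore:
    """Hypothetical stand-in for a remote store such as Redis or Memcached."""

    def __init__(self):
        self._data = {}

    def save(self, session_id, session):
        # Serialize so that only plain data is ever stored, as a remote store would require.
        self._data[session_id] = json.dumps(session)

    def load(self, session_id):
        raw = self._data.get(session_id)
        return json.loads(raw) if raw is not None else {}


class AppInstance:
    """One stateless copy of the web application."""

    def __init__(self, name, store):
        self.name = name
        self.store = store  # the only place where session state lives

    def handle_add_to_cart(self, session_id, item):
        # Load the session, change it, and write it back within a single request.
        session = self.store.load(session_id)
        session.setdefault("cart", []).append(item)
        self.store.save(session_id, session)
        return {"handled_by": self.name, "cart": session["cart"]}


store = InMemorySessionStore()
instance_a = AppInstance("instance-a", store)
instance_b = AppInstance("instance-b", store)

session_id = str(uuid.uuid4())
first = instance_a.handle_add_to_cart(session_id, "book")
second = instance_b.handle_add_to_cart(session_id, "pen")
print(second)
```

The second request lands on a different instance yet still sees the item added by the first, because neither instance keeps the cart in its own memory.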

Architecture Challenges Solved

These are some of the challenges solved by the reference architecture described above.

Scaling to Unforeseen Demands

You would need to add capacity as the product gains traction. However, you wouldn’t want to overspend on infrastructure too early in the life cycle.

Wouldn’t it be great if you could add servers as you need them and remove them should demand fall – including the hiring and firing of the server admins that come with them?

Fortunately all AWS services recommended above scale on demand. You can add capacity as you go and remove it when there’s a need to do so. Most of the services described above also come with the people-power needed to support them – hence you don’t need to hire and fire sysadmins yourself.

Keeping Costs Under Control

Buying too much infrastructure too soon would probably stifle your startup’s cash flow. Hence I’d figure that keeping infrastructure costs under control is a concern for you.

What if you could easily determine how much infrastructure your application needs at any given point of the product life cycle?

AWS provides extensive low-capacity free tiers that you can use for development. Better yet, use this free tier as the staging and performance testing landscape. Use it to load-test your application and collect performance metrics so that you can estimate the application’s per-user capacity requirements. That is, use load testing to measure how many concurrent users a “basic” tier application and database instance can support before performance starts to degrade significantly. Use this figure to map out your infrastructure growth needs.
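
As a sketch of how such load-test results could be turned into a capacity figure, the function below picks the highest tested concurrency whose 95th-percentile latency stays within a limit. The measurements, the 500 ms limit, and the function name are all made up for illustration; substitute whatever your own load-testing tool reports.

```python
def max_supported_users(results, latency_limit_ms):
    """Return the highest tested concurrency whose p95 latency stays within the limit.

    `results` is a list of (concurrent_users, p95_latency_ms) pairs, one per load-test run.
    """
    supported = 0
    for users, p95_ms in sorted(results):
        if p95_ms > latency_limit_ms:
            break  # performance has degraded past the acceptable limit
        supported = users
    return supported


# Made-up measurements for a single "basic" tier instance.
load_test_results = [(50, 120), (100, 150), (200, 310), (400, 900), (800, 2500)]
capacity = max_supported_users(load_test_results, latency_limit_ms=500)
print(capacity)  # 200
```

With a per-instance figure like this in hand, projected user numbers translate directly into a projected instance count.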

Disaster Recovery

Whether cloud or bare-metal servers, you would need to provision for unforeseen disasters — data center outages, submarine cable disruptions, or other issues external or internal to your application. As the adage says, “What can go wrong, will go wrong” and you should be prepared for most things that can go wrong.

Wouldn’t it be great if your users could continue to use your application even during crises like server problems, denial-of-service attacks, or even programming errors? What if you could have all this and yet pay no money towards idle capacity?

AWS solves this with multiple availability zones: distinct physical locations in which your instances run. You can configure these as active/active disaster recovery servers; in other words, your backup landscapes also serve users and are not just a cost overhead. Thus should there be a severe problem in one availability zone, your users will not see an outage – only a slight degradation of performance.
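
How slight that degradation is depends on how the instances are spread across zones. The following back-of-the-envelope sketch assumes that every instance has the same capacity and that the largest zone is the one that fails; the function name and the instance counts are illustrative only.

```python
def capacity_after_zone_loss(instances_per_zone, zones_lost=1):
    """Fraction of total capacity still serving users after losing some zones."""
    total = sum(instances_per_zone)
    # Worst case: the zones holding the most instances are the ones that fail.
    surviving = sorted(instances_per_zone)[: len(instances_per_zone) - zones_lost]
    return sum(surviving) / total


remaining = capacity_after_zone_loss([4, 4, 4])
print(round(remaining, 2))  # 0.67
```

With three equally sized zones, two thirds of the capacity survives, so the remaining instances need enough headroom (or need to scale out quickly) to absorb the extra load.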

Database Maintenance and Configuration

Configuring, tuning, and maintaining databases is a challenge that may not be unique to your company. It requires specialized skills that your company may not have on board.

What if the database administrator (DBA) comes with the database itself? What if you can hire and fire DBAs as needed?

Amazon RDS is a fully managed database-as-a-service. The service includes backups, failover, software updates, and much more. The company guarantees a monthly uptime of 99.95% for the service (roughly 22 minutes of downtime per month at most). DigitalOcean also provides a competing service.
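
The downtime figure follows from simple arithmetic on the uptime percentage. The small helper below (its name is illustrative) works it out, assuming a 30-day month.

```python
def allowed_downtime_minutes(uptime_percent, days_in_month=30):
    """Minutes per month that a service may be down without breaking its uptime target."""
    minutes_in_month = days_in_month * 24 * 60
    return minutes_in_month * (100 - uptime_percent) / 100


downtime = allowed_downtime_minutes(99.95)
print(round(downtime, 1))  # 21.6
```

For a 31-day month the same calculation gives a little over 22 minutes, so the figure is best read as an approximation.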

User Latency

When you target users located on different continents, latency (including page load-times) may be an issue. Data cannot travel faster than the speed of light – and if it needs to cross many switches, routers, and several internet service providers (ISPs), data transfer times can really slow your site down.

Ideally you could teleport right next to your user with your server in hand and hook it directly to their computer, so that there’s almost zero latency since the app runs pretty much locally.

A Content Delivery Network (CDN) is the next best thing to wiring directly to your users’ laptops. Companies providing CDN services partner with many ISPs around the world to pre-distribute files to the latter’s servers. Therefore when your users access your website’s static files, they’re not really loading them from your servers but from their ISP’s instead (or another ISP adjacent to it). This vastly improves overall page load times since these files do not need to traverse the world to reach your users’ browsers. CloudFront is AWS’ CDN product. Akamai is a competitor and has been doing CDN since 1998.

Load Distribution

Running an application on multiple instances raises the issue of utilizing all of them effectively. Furthermore, should an instance crash, other instances should take over its work without missing a beat – you wouldn’t want downtime, would you? Not to mention that you need to ensure that your disaster recovery landscape also serves users instead of just sitting idle, burning money.

What if you could have zero downtime for your application? What if you could update your application’s code without causing an outage for your users? What if you could make the disaster recovery landscape also work to serve users and not just sit idle?

The Application Load Balancer takes care of distributing requests evenly to instances of the application. It works by distributing individual web requests (working at OSI Layer 7) with oodles of routing rules that you can customize. AWS’ Elastic Load Balancing also provides a connection-level (OSI Layer 4) load balancer should you need it.
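
To illustrate what request-level (Layer 7) distribution means, here is a toy router that picks a group of targets by URL path and then rotates through that group. It is a simplification for illustration, not how the Application Load Balancer is implemented or configured: the class name, the longest-prefix matching, and the target names are all assumptions.

```python
import itertools


class PathRouter:
    """Toy Layer 7 router: choose a target group by path prefix, then round-robin."""

    def __init__(self, rules, default_targets):
        # rules: list of (path_prefix, [targets]); longer prefixes are checked first.
        ordered = sorted(rules, key=lambda rule: len(rule[0]), reverse=True)
        self._rules = [(prefix, itertools.cycle(targets)) for prefix, targets in ordered]
        self._default = itertools.cycle(default_targets)

    def route(self, path):
        for prefix, targets in self._rules:
            if path.startswith(prefix):
                return next(targets)
        return next(self._default)


router = PathRouter([("/api/", ["api-1", "api-2"])], ["web-1", "web-2", "web-3"])
paths = ["/", "/api/users", "/about", "/api/orders", "/contact", "/"]
picked = [router.route(path) for path in paths]
print(picked)
# ['web-1', 'api-1', 'web-2', 'api-2', 'web-3', 'web-1']
```

A connection-level (Layer 4) balancer, by contrast, never looks at the path; it can only decide where each connection goes.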

Fault Tolerance

Bad things could happen. Your app (or its runtime) could have a memory leak, run out of memory, and crash. Pointer problems could also lead to crashes. Maybe deadlocks happen and the app freezes. On a bare-metal server, this would cause an outage for your users.

Wouldn’t it be great if your app could recover by itself when it gets stuck or stumbles? At least temporarily, while you are working on a longer-term fix?

Running web applications in ECS ensures that failed instances are restarted automatically. Furthermore, the containerized configuration ensures that application files are reset to their original state upon restart, hence minimizing the impact of security intrusions on those files.

Placing application instances behind an Application Load Balancer allows it to direct user requests only to healthy instances. Non-functioning instances are taken out of the pool while they are being restarted.

Furthermore, maintaining user sessions in ElastiCache allows seamless failover of user requests between instances.
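
The idea of taking unhealthy instances out of the pool can be sketched as a small bookkeeping class. This is only a model of the behaviour described above: the class name, the threshold of two consecutive failed checks, and the instance names are assumptions, and a real load balancer performs the checks itself over the network.

```python
class HealthTracker:
    """Tracks consecutive failed health checks per instance."""

    def __init__(self, instances, unhealthy_threshold=2):
        self._failures = {name: 0 for name in instances}
        self._threshold = unhealthy_threshold

    def record_check(self, name, passed):
        # A passing check resets the counter; a failing one increments it.
        self._failures[name] = 0 if passed else self._failures[name] + 1

    def healthy_instances(self):
        # Only instances below the failure threshold may receive requests.
        return [name for name, failed in self._failures.items() if failed < self._threshold]


tracker = HealthTracker(["app-1", "app-2", "app-3"])
tracker.record_check("app-2", passed=False)
tracker.record_check("app-2", passed=False)
during_outage = tracker.healthy_instances()

tracker.record_check("app-2", passed=True)  # the instance was restarted and answers again
after_restart = tracker.healthy_instances()
print(during_outage, after_restart)
```

Because sessions live outside the instances, requests that would have gone to `app-2` can be served by the other two without users losing their state.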

Data Security

As far as security is concerned, there are two kinds of data: data in transit and data at rest. In transit means that it is flowing through some kind of communication link and is susceptible to interception attacks (e.g. eavesdropping or man-in-the-middle attacks). Data at rest, on the other hand, is about storage – databases, files, hard drives, and tapes.

Your customers entrust their data to you – to keep it safe from prying eyes as well as to make sure that it is not compromised (i.e. undesirably altered). You need to earn this trust every day. Unfortunately going “cloud” means more moving parts and thus more things to secure and lock down.

AWS provides an option for you to encrypt all data at rest using the industry-standard AES-256. Furthermore, RDS handles authentication and transparent encryption/decryption of your data without needing you to change your database clients. You can configure SSL to secure data in transit between components of your application. AWS provides SSL root certificates for all its regions.
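
On the client side, securing data in transit largely comes down to verifying the server’s certificate against a trusted root. Below is a minimal sketch using only Python’s standard `ssl` module. The helper name `make_tls_context` and its `ca_bundle_path` parameter are illustrative; how the resulting context is handed to your database driver depends on the driver and is not shown.

```python
import ssl


def make_tls_context(ca_bundle_path=None):
    """Build a client-side TLS context that verifies the server certificate."""
    # With ca_bundle_path=None the system trust store is used. Pass the path of a
    # downloaded root certificate bundle to trust that bundle instead.
    context = ssl.create_default_context(cafile=ca_bundle_path)
    context.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse older protocol versions
    return context


context = make_tls_context()
print(context.verify_mode == ssl.CERT_REQUIRED, context.check_hostname)
```

The default context already requires a valid certificate and checks the host name, which is what protects against the man-in-the-middle attacks mentioned earlier.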

Access Control to Production Landscapes

As your team grows, as you hire (and maybe fire) people, you need to make sure that everyone only has access to the systems that they need to do their work. Similarly, as roles change you would need to revoke access as well. This protects both your company and your employees from becoming targets of blame when excrement happens.

AWS Identity and Access Management (IAM) enables you to create users and groups which control access to various resources. This enables the separation of roles in your organization to map directly to separation of access.
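
As an illustration of mapping a role to a set of permissions, the sketch below builds a policy document in the JSON shape that IAM uses. The helper name, the idea of a read-only “support” role, and the particular choice of actions are assumptions for the example; a real policy should be scoped to specific resources rather than `*`.

```python
import json


def read_only_policy(actions, resource="*"):
    """Build an IAM-style policy document that allows only the listed actions."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": sorted(actions), "Resource": resource}
        ],
    }


# Hypothetical role: support staff may look at services and databases but not change them.
support_policy = read_only_policy(
    ["ecs:DescribeServices", "ecs:ListTasks", "rds:DescribeDBInstances"]
)
print(json.dumps(support_policy, indent=2))
```

Attaching a policy like this to a group, and then adding or removing people from the group, keeps access changes in step with role changes.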

Archival Strategy

The cost of keeping data online grows as the volume of data grows. However, some legal requirements may oblige you to keep data for a certain number of years – even after it is no longer actively used.

This is solved by moving some data out of your application’s operational databases and storage into a low-cost data store. In turn, since this is low-cost storage intended for infrequent access, you would retrieve the data only if there are out-of-the-ordinary legal requirements to do so.

Amazon Glacier provides low-cost long-term storage of infrequently accessed data. This is perfect for your archival strategy. Furthermore, you could automate the archival processes via serverless scripts deployed as AWS Lambda functions. By using Lambda functions to run the archival logic, you don’t need to pay for infrastructure when the process is not running.
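
The archival logic itself can be quite small. Here is a sketch of a function in the `handler(event, context)` shape that Lambda uses for Python. The one-year retention period, the record layout, and the assumption that candidate records arrive in the triggering event are all made up for illustration; a real function would query the operational database and then upload to the archive store, both of which are omitted here.

```python
from datetime import datetime, timedelta, timezone

RETENTION_DAYS = 365  # hypothetical: keep one year of data online


def select_for_archive(records, now, retention_days=RETENTION_DAYS):
    """Return the records that were last used before the retention cutoff."""
    cutoff = now - timedelta(days=retention_days)
    return [record for record in records if record["last_used"] < cutoff]


def handler(event, context):
    """Entry point in the (event, context) shape of a Python Lambda function."""
    now = datetime.now(timezone.utc)
    # Assumption: the event carries candidate records with ISO 8601 timestamps
    # that include a UTC offset, e.g. "2020-05-01T00:00:00+00:00".
    records = [
        {"id": item["id"], "last_used": datetime.fromisoformat(item["last_used"])}
        for item in event.get("records", [])
    ]
    to_archive = select_for_archive(records, now)
    # Moving the selected records into the archive store is omitted in this sketch.
    return {"archived_ids": [record["id"] for record in to_archive]}


result = handler(
    {"records": [
        {"id": "old", "last_used": "2000-01-01T00:00:00+00:00"},
        {"id": "new", "last_used": "2999-01-01T00:00:00+00:00"},
    ]},
    None,
)
print(result)  # {'archived_ids': ['old']}
```

Keeping the selection rule in its own function makes it easy to test without any cloud infrastructure.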

Environment Replication

More often than not, you would need several copies of your production environment – be it for ongoing development, integration testing, performance testing, or even ad-hoc copies to simulate peculiar problems occurring in production. As environment differences can create hard-to-find bugs, you’d like these replicas to mirror the production environment as closely as possible.

What if you could easily freeze-dry and clone all of your servers? Wouldn’t it be handy for those “hard-to-simulate” issues? Wouldn’t that make educating new hires easier, since they can play around with a full replica of the live system?

AWS OpsWorks Stacks enables you to create copies of the entire landscape at the click of a button. Simply model your architecture as a stack, and creating a new environment is as simple as point and click.

Any Other Options besides AWS?

The key points of this reference architecture are:

- Managed services

- Horizontal scalability

Most of the components needed in a server-side stack are generic (i.e. “off-the-shelf”). Since they are standard software, you could delegate their operation to someone else, ideally those who are more expert than you. Operating such software involves tuning, patching (i.e. security updates), and meeting a minimum uptime guarantee. Outsourcing these frees your staff’s bandwidth to focus on the things that are unique to your company. In the architecture described above, most off-the-shelf components are managed by AWS – in other words, you outsource the operation of these standard components to AWS’ staff, for which you pay an all-inclusive price.

Horizontal scalability means you add more instances as capacity demands grow. As the number of concurrent users increases, you add more ECS instances as needed. Conversely, if demand decreases you can respond by reducing the number of instances. Other components such as databases are scaled up or down in a similar manner.
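
One simple way to decide how many instances to run is a proportional rule: scale the current count by the ratio of a measured load metric to its target value. The sketch below is an illustration of that idea, not a description of how any AWS scaling policy is implemented; the function name, the choice of metric, and the bounds are assumptions.

```python
import math


def desired_instances(current, metric_value, target_value, minimum=1, maximum=20):
    """Scale the instance count in proportion to how far the metric is from its target."""
    wanted = math.ceil(current * metric_value / target_value)
    # Clamp so that a metric spike or a quiet night cannot push the count out of bounds.
    return max(minimum, min(maximum, wanted))


# Metric here: average CPU utilisation in percent, with a target of 60%.
print(desired_instances(current=4, metric_value=90, target_value=60))    # 6 (scale out)
print(desired_instances(current=6, metric_value=20, target_value=60))    # 2 (scale in)
print(desired_instances(current=10, metric_value=300, target_value=60))  # 20 (capped)
```

The upper bound doubles as a cost guard: it caps what a traffic spike, or a bug, can spend on your behalf.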

Thus when you are considering hosting your services with another cloud provider, consider these two factors and how your alternative provider can satisfy them. Remember that when your company is just starting, it makes sense to outsource just about everything to the experts except the few parts that are unique to it: the organization’s core competence, which drives business value. Even the third most-popular website (Reddit) does not host its own servers.

Next Steps

If you haven’t dockerized your web application, try it and see what changes are required for it to run under Docker. This is the first step in preparing the application to run in a cloud container service – be it ECS, Cloud Foundry, or Heroku. Be sure to demarcate the files that the application can modify (i.e. data files) from those of the application’s runtime code. This is probably more relevant for interpreted languages such as Python or PHP and less so for Swift or Java.
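
One way to keep modifiable files apart from the application’s code is to write them only under a directory that is configured from outside the application. The sketch below assumes a hypothetical environment variable named `APP_DATA_DIR` and a hypothetical `save_upload` helper; in a container, that directory would be the one place backed by persistent storage.

```python
import os
import tempfile
from pathlib import Path


def data_dir():
    """Directory for files the app may modify, kept apart from the code directory."""
    # APP_DATA_DIR is a hypothetical variable name; the fallback is for local runs only.
    default = Path(tempfile.gettempdir()) / "myapp-data"
    path = Path(os.environ.get("APP_DATA_DIR", default))
    path.mkdir(parents=True, exist_ok=True)
    return path


def save_upload(filename, content):
    """Write uploaded bytes into the data directory and return the resulting path."""
    # Keep only the final path component so that uploads cannot escape the data directory.
    target = data_dir() / Path(filename).name
    target.write_bytes(content)
    return target


saved = save_upload("../notes.txt", b"hello")
print(saved.name)  # notes.txt
```

Everything outside that directory can then be treated as read-only and rebuilt from the image on every restart.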

Next, start splitting static assets (i.e. images, style sheets, and client-side JavaScript) from your web app’s code base (and hence its Docker container) and see if you can serve them from an alternate domain. See if your HTML template code can be configured to source these files dynamically from an attribute of the client. This would prepare your application to serve them from a CDN without prematurely committing to one.
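
One possible way to avoid hard-coding where static files live is to build every asset URL through a single helper that reads its base URL from configuration. This is a sketch of that idea rather than the only approach: `asset_url`, the `STATIC_BASE_URL` variable, and `cdn.example.com` are hypothetical names.

```python
import os


def asset_url(path, base_url=None):
    """Build the URL of a static asset from a configurable base URL."""
    if base_url is None:
        # STATIC_BASE_URL is a hypothetical setting; default to serving from the app itself.
        base_url = os.environ.get("STATIC_BASE_URL", "/static")
    return base_url.rstrip("/") + "/" + path.lstrip("/")


print(asset_url("css/site.css", "/static"))
# /static/css/site.css
print(asset_url("/img/logo.png", "https://cdn.example.com/"))
# https://cdn.example.com/img/logo.png
```

Templates that call a helper like this can switch to a CDN later by changing one setting instead of every page.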

Finally, sign up with one or more cloud providers and deploy your web app on their lowest tiers to test feasibility. Many cloud providers offer a free tier for development purposes so that you can try them out. Be sure to make full use of this offering if it’s available.
