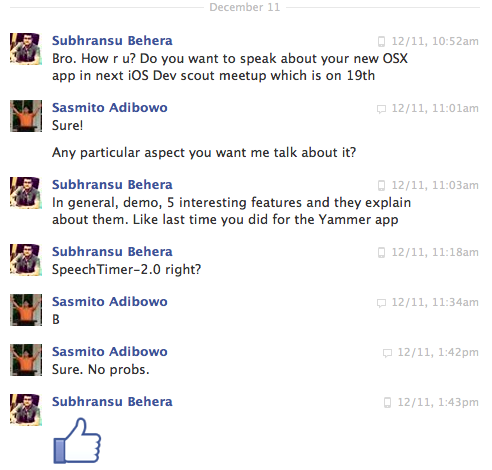

Last Thursday (19-Dec-2013) I spoke at Subhransu Behera’s iOS Dev Scout. Since I spent last weekend and practically the week following it preparing for the talk, I had to skimp on this blog for a while – sorry about that. Hopefully this post will make up for it, including for those who wanted to attend but couldn’t make it.

In essence the talk was about Speech Timer 2 and some technical lessons that I learned during the project. Topics range from iOS 7’s post-skeuomorphic paradigm to iCloud handling and a bit of trivia about finding the presentation screen on a Mac. These are lessons I learned the hard way, and hopefully you can save time by learning from my experience.

Background

Back in 2008, about the same time the iPhone SDK became official, I was doing Toastmasters. Toastmasters is an international franchise of public speaking clubs that aims to improve its members’ communication and presentation skills. At one time I served on the executive committee (ex-co) of the Barclays Capital Toastmasters club, an intra-office club at my then day-job company. Being an ex-co member meant that I helped organize meet-ups and often had to be more than just a speaker at them.

One role I frequently had to fill was timekeeper. The job was to ensure that speeches stayed within the allocated time. However, when I was timekeeper I sometimes forgot to look at the stopwatch and signal the time, especially if the speech was good. Another problem was forgetting to bring either the stopwatch or the time signal flags.

Those lapses were the inspiration for Speech Timer. It’s a classic case of the “scratch-my-own-itch” approach, hoping that the solution can help others as well.

What is Speech Timer

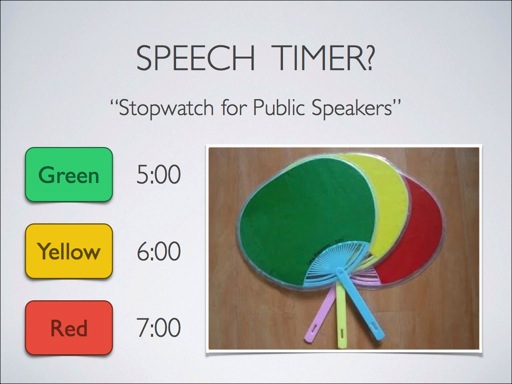

Put simply, Speech Timer is a stopwatch for public speakers. There are three important time marks for this type of speech:

- Green – you’ve spoken long enough to qualify.

- Yellow – you’ll need to finish up quickly.

- Red – you took too much time and are thus disqualified.
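
To make the three marks concrete, here is a minimal Swift sketch of how a timer could decide which signal to show. The names `SpeechTiming` and `Signal` and the 5/6/7-minute values are hypothetical placeholders for illustration, not taken from Speech Timer’s source.

```swift
/// Hypothetical model of one speech type's three time marks, in seconds.
struct SpeechTiming {
    let green: Double   // spoken long enough to qualify
    let yellow: Double  // time to finish up
    let red: Double     // over time

    enum Signal { case off, green, yellow, red }

    /// Returns the signal that should be showing after `elapsed` seconds.
    func signal(at elapsed: Double) -> Signal {
        if elapsed >= red { return .red }
        if elapsed >= yellow { return .yellow }
        if elapsed >= green { return .green }
        return .off
    }
}

// Example values only: green at 5 minutes, yellow at 6, red at 7.
let timing = SpeechTiming(green: 300, yellow: 360, red: 420)
```

Checking the marks from the latest to the earliest means each elapsed time maps to exactly one signal.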

Why Now?

Active development of Speech Timer ran mainly from 2008 to around 2011, after which I refocused my efforts on other applications. Moreover, I moved jobs and couldn’t find another suitable Toastmasters club near my new office; having left the community made it more difficult for me to serve the niche and improve the application.

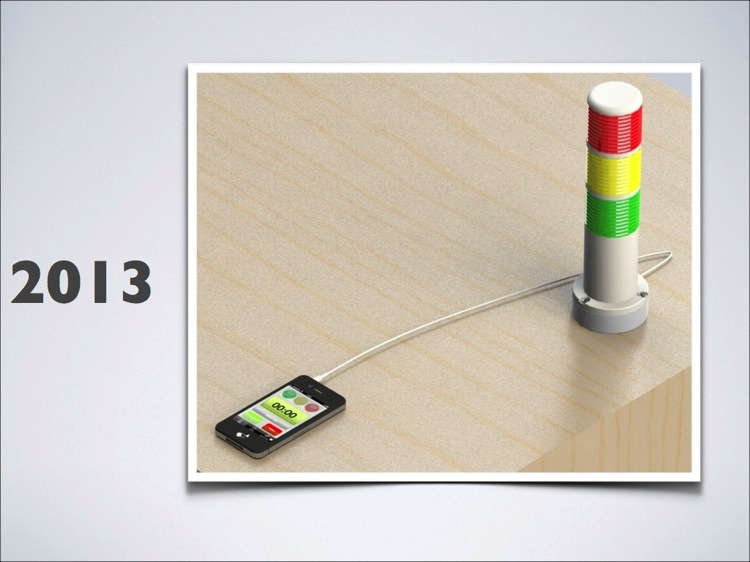

However, in mid-2013 there was renewed interest in Speech Timer, sparked by a guy who wanted to build hardware that interfaces with it. The gizmo is a traffic-light style signal controlled via the audio jack. Supporting it could be a good promotional opportunity for Speech Timer. However, it meant making changes to Speech Timer, which was not straightforward even for a small change, since the current Xcode no longer supports iOS 3.1.3. In other words, just re-compiling the code would mean dropping support for a number of devices without adding any functionality.

But 2013 is also the year when iOS 7 was announced and with it, a significant shift in the user interface style. This means applications that aren’t updated to follow suit will quickly look obsolete and unmarketable.

Given all this, I figured it was a good time to modernize Speech Timer. Apart from redesigning for iOS 7, it was also a good idea to support the iPad. Having a Mac app was another goal of the project, since there had been a number of requests for a Mac version of Speech Timer, primarily for classroom situations.

Redesigning iOS 6 apps for iOS 7

Those who are unfamiliar with the arts may think that iOS 7 is simply about being “flat”; some may even say it’s a plain rip-off of Windows Phone 7 or Android. But those who are more involved may see the finer points of the transition: it’s about the evolution of touch screens and high-resolution mobile displays and how people interact with them. Back in the classic Palm OS days when touch screens were new, there weren’t many pixels in a 160×160 monochrome screen to show 3D-looking buttons, hence buttons were flat. They were also unattractive, which is probably why PDAs and smartphones didn’t gain much ground for the first 20 years or so of their existence. But like it or not, the original iPhone re-popularized skeuomorphism in computer interfaces: the combination of a touch screen, a high-resolution display (for its time), and the direct manipulation paradigm used skeuomorphism to bridge the gap between the digital and physical worlds.

However, by the time iOS 7 came around, the masses already understood how to use touch screens, making skeuomorphism obsolete. Hence iOS 7’s design approach is about putting content first and foremost: hide or remove unnecessary clutter around the user’s data and dedicate as much space as possible to the content. It’s also about dynamism: being better than what real-world objects can do (in other words, going beyond skeuomorphism).

Apple

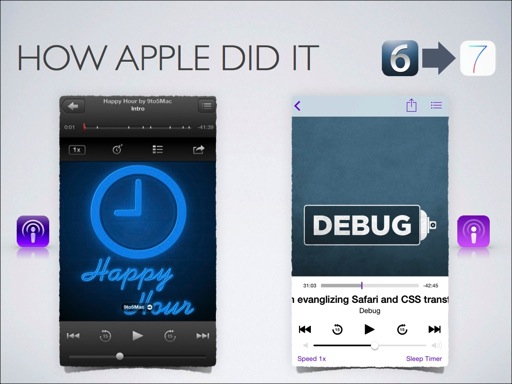

Let’s take an example of how Apple approaches this with one of their own apps, specifically one that is not part of core iOS: the Podcasts app.

At first glance you can see that the 3D effects have been removed, except for the volume control. Notice also that the primary image (i.e. the content) extends upwards beneath the translucent navigation bar. Furthermore, the textual buttons are now colored purple, the same as the dominant color of the application’s icon.

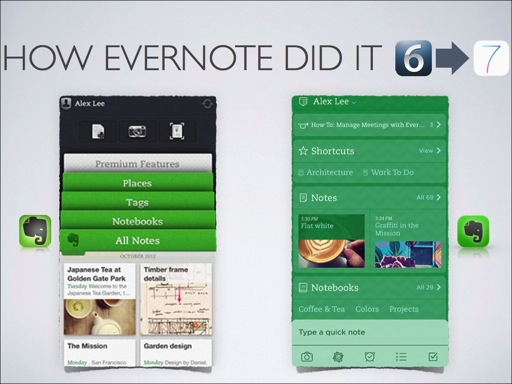

Evernote

Now let’s take a cue from Evernote. I selected Evernote as an example because they try really hard to optimize for every platform that they support. This is a rather unorthodox approach for a software-as-a-service (SaaS) offering, which Evernote is, since they charge a subscription to use their app. Usually SaaS businesses are content to provide only web-based access to their app. Sometimes they provide a lowest-common-denominator cross-platform application using technologies like Adobe AIR, PhoneGap / Cordova, or even Java. But Evernote stands out by providing a native experience on each platform.

In the iOS 6 to iOS 7 transition, Evernote removed their faux notebook metaphor in favor of a sleek dashboard interface. By now users have “grown up” and no longer require the fake notebook UI to know how to use it. In line with iOS 7’s “content-first” approach, the new home screen “dashboard” provides more space to display the user’s content: an overview of the notes they have stored in Evernote. Not having to emulate a notebook also provides more space to display the “Evernote green” branding more prominently.

Speech Timer

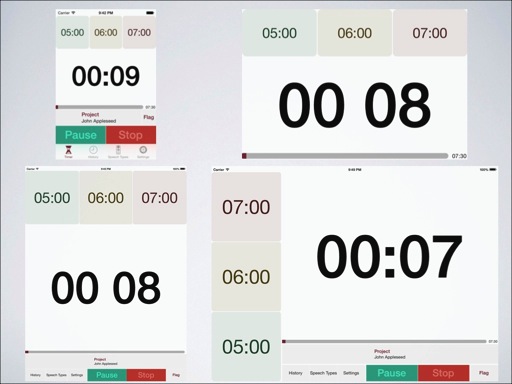

Following the skeuomorphic paradigm of the original iPhone OS up to iOS 6, Speech Timer was designed to mimic a physical stopwatch with colored LED indicators. That faux LCD display at the center was intended to emulate the monochrome 5GB iPod or old PalmPilot displays that were popular at the start of this century.

Now with iOS 7 I’ve set out to remove as much chrome as possible and focus on the content. The timer light indicators have been enlarged and made flush with the edges of the screen. The timer display is also as big as possible. Likewise, the buttons sit flush with the edges of the screen with only hairline separators between them.

Likewise, the speech types list has been updated to give more space to the content and remove unnecessary clutter. Notice that the time light color indicators have been merged into the numeric values, giving more screen area to those time marks and the colors that they represent.

However, I haven’t yet figured out what Speech Timer’s tint color should be: the identifying color of the app, displayed prominently on textual buttons and as the app icon’s primary color. Just compare the navigation buttons’ tint and the application icon’s background color; they don’t match at present.

Dual Platform Programming

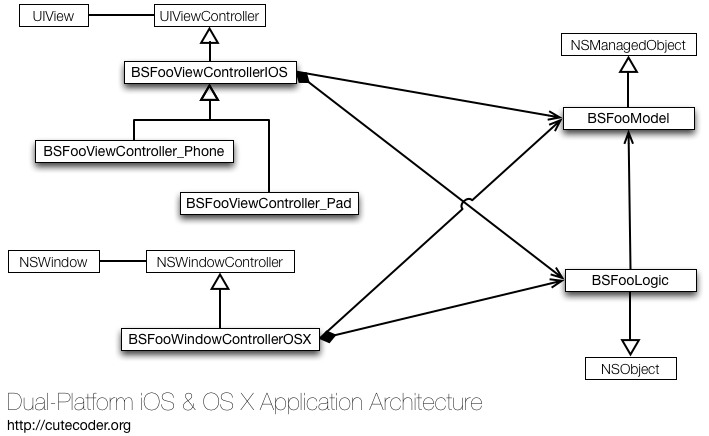

Speech Timer supports iOS and OS X from a single code base: there is one Xcode project with two primary build targets, one for each platform. Furthermore, the iOS app is universal, supporting both the iPad and iPhone from a single binary.

Architecture

The iOS and OS X editions of Speech Timer each have their own set of controller classes. Some of these controller classes share common code that is factored into “logic” classes; at runtime, each logic instance is exclusively owned by its controller.

A few custom views are shared between the two platforms, using conditional compilation statements to separate out platform-specific code (for example, switching between UIView and NSView as their superclass). Typically I override the isFlipped method of NSView so that the view coordinate system on OS X matches the one on iOS, which simplifies the view’s drawing logic.
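
As a rough illustration of that shared-view pattern, here is a minimal sketch written in Swift. Speech Timer itself predates Swift, so this is not the project’s actual code, and `PlatformView` and `SignalLightView` are hypothetical names. It only builds on Apple platforms, where AppKit or UIKit is available.

```swift
#if os(macOS)
import AppKit
typealias PlatformView = NSView   // superclass for the OS X build target
#elseif os(iOS)
import UIKit
typealias PlatformView = UIView   // superclass for the iOS build target
#endif

/// Hypothetical custom view compiled into both targets.
final class SignalLightView: PlatformView {
    #if os(macOS)
    // AppKit puts the origin at the bottom-left by default. Reporting the
    // view as flipped moves it to the top-left, as in UIKit, so the same
    // drawing code can serve both platforms.
    override var isFlipped: Bool { return true }
    #endif
}
```

Keeping the platform switch in one type alias means the rest of the view’s code can refer to `PlatformView` without further conditionals.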

I’ve outlined this approach in an earlier post. However, while adding support for iCloud, I decided not to use UIManagedDocument and opted instead for a simpler class to manage Speech Timer’s Core Data stack.

Auto-Layout

On iOS there are separate view controller subclasses for iPad and iPhone. But their primary role is to adjust auto-layout constraints for the respective devices. Auto layout makes it a lot simpler to support the three variants of iOS devices available today: iPad, iPhone 3.5” (iPhone 4 and 4S) and iPhone 4” (iPhone 5, 5S, and 5C) in both landscape and portrait orientations.

Auto layout is even more useful on the Mac, where resizable windows and various monitor configurations demand flexibility in view placement. Shown below are the Mac app’s primary windows. On the bottom right is the control window for configuring the speech type, starting and stopping the timer, and setting the orator’s name. On the top left is the display window, intended to be shown on an overhead projector.

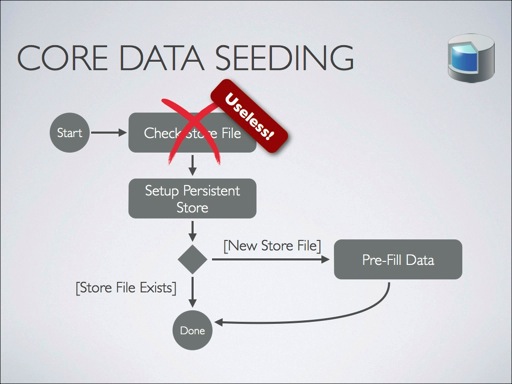

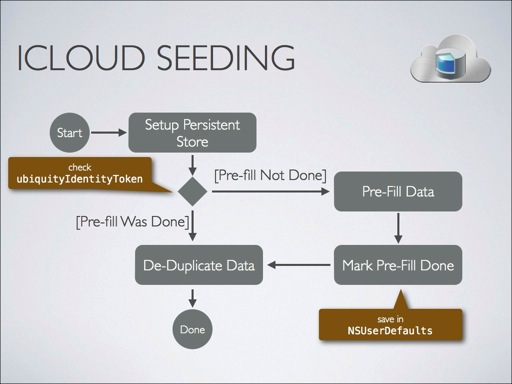

Seeding Core Data Objects on iCloud

When you need to provide some starter data for a Core Data library-style application, normally you’ll check whether the persistent store file exists just before you configure the persistent store coordinator. If it doesn’t exist, then it’s safe to create the initial pre-populated data without fear of duplicating data.
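
For the local-only case, that check can be sketched in Swift as below. The function name `needsSeeding` is a hypothetical helper, and the sketch assumes a single file-based store whose location you already know.

```swift
import Foundation

/// Returns true when no store file exists yet at `storeURL`.
/// Call this BEFORE adding the store to the persistent store coordinator,
/// because adding the store creates the file.
func needsSeeding(storeURL: URL, fileManager: FileManager = .default) -> Bool {
    return !fileManager.fileExists(atPath: storeURL.path)
}
```

The result has to be captured before the Core Data stack is set up and acted on afterwards, once there is a managed object context to insert the starter objects into.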

However, checking for the existence of the store file does little good if the Core Data stack is associated with an iCloud store. There could be a pre-existing data store in the cloud and there is no way to check for it, at least not without blocking the application. Moreover, iCloud also needs to function while off-line, hence more than one device may have a persistent store that will eventually be synchronized to the same iCloud store.

Because of this, you’ll just have to accept that you need to de-duplicate the data store each time you open it. You’ll also need to run the data seeding logic for each new iCloud account that your application encounters on a new device. I’ve outlined these and a few other techniques in an earlier article on seeding an iCloud data store.
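
A de-duplication pass could look roughly like the Swift sketch below. It is an illustration under stated assumptions rather than the technique from the earlier article: `SpeechType` is a hypothetical stand-in for a seeded Core Data object, its `name` is assumed to be the natural key, and the tie-break on `uniqueID` is just one possible rule.

```swift
/// Hypothetical stand-in for a seeded Core Data object.
struct SpeechType {
    let name: String       // assumed natural key: one record per name
    let uniqueID: String   // e.g. a UUID string assigned at creation
}

/// Keeps one record per name and returns the rest for deletion.
/// The survivor is always the record with the smallest `uniqueID`, so every
/// device that runs this pass independently picks the same one.
func duplicatesToDelete(in records: [SpeechType]) -> [SpeechType] {
    var keepers: [String: SpeechType] = [:]
    var losers: [SpeechType] = []
    for record in records {
        if let current = keepers[record.name] {
            if record.uniqueID < current.uniqueID {
                losers.append(current)
                keepers[record.name] = record
            } else {
                losers.append(record)
            }
        } else {
            keepers[record.name] = record
        }
    }
    return losers
}
```

The deterministic tie-break matters: if two devices each kept “their own” copy, the duplicates would simply reappear after the next sync.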

Handling Presentations

On iOS you’ll get notifications when the user connects or disconnects an external display. You can then safely assume that this new “screen” is the one that the audience sees: an overhead projector, a monitor, or even an Apple TV connected to a large television screen.

However, on the Mac things are not as straightforward. All you get is an array of screens that are seemingly identical. Just think about it: some Macs, like the Mac Mini and Mac Pro, may not even have built-in monitors. The latter can even be connected to four displays simultaneously.

To find the presentation screen on the Mac, you simply need to find the largest screen, in physical dimensions rather than in pixels. You can get a display’s physical size by calling CGDisplayScreenSize; please refer to my answer on this topic on Stack Overflow.
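
Here is a minimal Swift sketch of that idea, not the code from the Stack Overflow answer. `DisplayInfo`, `presentationDisplay(among:)` and `attachedDisplays()` are hypothetical names, and comparing by area is an assumption about what “largest” means. The selection logic is kept separate from the macOS-only part that calls `CGDisplayScreenSize`, which reports a display’s size in millimeters.

```swift
#if os(macOS)
import AppKit
#endif

/// Physical size of one display, in millimeters.
struct DisplayInfo {
    let id: UInt32
    let widthMM: Double
    let heightMM: Double
    var areaMM2: Double { return widthMM * heightMM }
}

/// Picks the display with the largest physical area, or nil for an empty list.
func presentationDisplay(among displays: [DisplayInfo]) -> DisplayInfo? {
    return displays.max { $0.areaMM2 < $1.areaMM2 }
}

#if os(macOS)
/// Collects the physical size of every attached screen.
func attachedDisplays() -> [DisplayInfo] {
    let key = NSDeviceDescriptionKey("NSScreenNumber")
    return NSScreen.screens.compactMap { screen -> DisplayInfo? in
        guard let id = screen.deviceDescription[key] as? CGDirectDisplayID else {
            return nil
        }
        let size = CGDisplayScreenSize(id)   // millimeters, not pixels
        return DisplayInfo(id: id,
                           widthMM: Double(size.width),
                           heightMM: Double(size.height))
    }
}
#endif
```

On a Mac, `presentationDisplay(among: attachedDisplays())` would then give the candidate screen for the display window.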

The Plan for Speech Timer

As of this writing, there are a number of things still pending for Speech Timer. One big item is UIKit Dynamics, something that sets iOS 7 apps apart from competing mobile platforms. There are also plenty of small bugs here and there that need tackling. I’m hoping to release this early next year – fingers crossed ^_^

The Video

Michael Cheng was very kind to record the presentation and post-process it. He used a splitter cable so the presentation could be captured in high quality, plus another camera pointed at me presenting the material. Michael combined those two inputs into one video for your enjoyment.
